Assessment 101

Evaluation Expert Case Study¹

Problem/Impetus

Student affairs (SA) professionals at James Madison University (JMU) are expected to demonstrate that their programs/events lead to student learning and development in important non-cognitive areas (e.g., intercultural competency). However, according to past needs assessment results, SA professionals report not having sufficient time, training, or leadership support to engage in learning outcomes assessment effectively. SA Assessment 101 was developed in 2018 to address the lack of training.

The Client: Center for Assessment & Research Studies (CARS)

The Ask: CARS seeks to determine the effectiveness of SA Assessment 101, a three-day workshop designed to improve the assessment knowledge, attitudes, and skills of current and future SA professionals.

Context

Background Information:

- SA Assessment 101 is attended by approximately 15-20 full-time professionals (voluntary attendance) and 10-15 graduate assistants/professionals-in-training (mandatory attendance).

- Workshop participants generally have minimal training/experience in learning outcomes assessment prior to their participation.

- The workshop includes a mix of lectures, activities, and projects designed to increase the value participants place on assessment, their knowledge of key assessment concepts, and their ability to engage in foundational assessment tasks (with support).

Plan/Solution

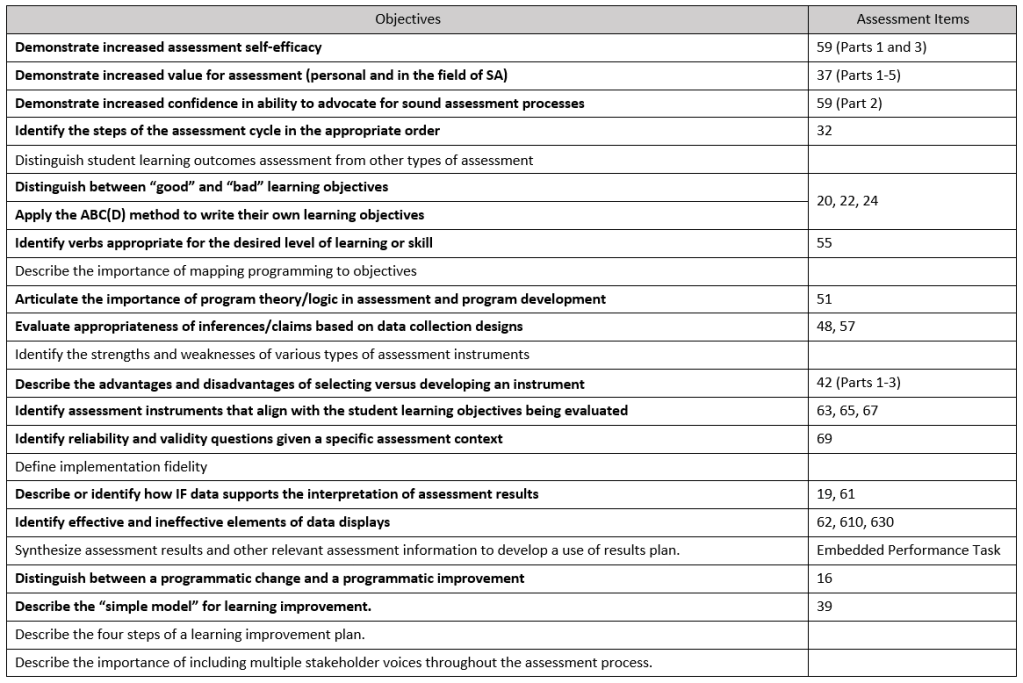

Step 1: Develop assessment measure aligned to key learning outcomes

A 20-item assessment measure containing a mix of Likert, multiple-choice, and open response items was developed using an assessment blueprint to ensure alignment with the key learning outcomes.

Step 2: Collect pre-post outcomes data and participant feedback

Participants completed the measure both before the workshop and immediately after. Feedback was also gathered through daily exit surveys and a focus group on Day 3.
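A pre-post design like this one is typically summarized through each participant's change between the two timepoints. The sketch below is a minimal illustration, assuming each participant has one matched total score per timepoint; the function name `prepost_summary`, the choice of the paired standardized effect size d_z, and the sample scores are all hypothetical, not the actual CARS analysis or data.

```python
from statistics import mean, stdev

def prepost_summary(pre, post):
    """Summarize matched pre/post total scores (hypothetical helper).

    pre, post: total scores for the same participants, in the same order.
    Returns the number of pairs, the mean gain, the SD of the gains, and
    d_z = mean gain / SD of gains (one of several paired effect sizes).
    """
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need at least two matched pre/post pairs")
    gains = [after - before for before, after in zip(pre, post)]
    mean_gain = mean(gains)
    sd_gain = stdev(gains)  # sample SD of the difference scores
    d_z = mean_gain / sd_gain if sd_gain > 0 else float("nan")
    return {"n": len(gains), "mean_gain": mean_gain, "sd_gain": sd_gain, "d_z": d_z}

# Hypothetical scores for five participants (illustration only, not workshop data)
pre_scores = [10, 12, 9, 14, 11]
post_scores = [14, 15, 13, 16, 15]
print(prepost_summary(pre_scores, post_scores))
```

Matching in practice depends on an identifier linking each participant's at-home or Day 1 pre-test to their Day 3 post-test; unmatched participants would be dropped from a paired analysis like this one.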

Step 3: Transform results into meaningful insights that generate action

Disaggregated results were combined with implementation integrity data, participant feedback, and item analysis/rater agreement results to inform modifications to both the workshop and assessment measure.
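The disaggregation step amounts to grouping change scores by participant type before summarizing them. This is a hypothetical sketch: the record layout, the group labels ("graduate", "full-time"), and the scores are assumptions for illustration, and it covers only the disaggregation, not the integration with implementation integrity, feedback, or item analysis data.

```python
from collections import defaultdict
from statistics import mean

def gains_by_group(records):
    """Mean pre-post gain per participant group (hypothetical helper).

    records: iterable of (group, pre_score, post_score) tuples.
    """
    gains = defaultdict(list)
    for group, pre, post in records:
        gains[group].append(post - pre)
    return {group: {"n": len(values), "mean_gain": mean(values)}
            for group, values in gains.items()}

# Hypothetical records (illustration only, not workshop data)
records = [
    ("graduate", 8, 14), ("graduate", 10, 15), ("graduate", 9, 12),
    ("full-time", 13, 15), ("full-time", 11, 14),
]
for group, summary in sorted(gains_by_group(records).items()):
    print(group, summary)
```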

Process Highlights

Step 1: Develop assessment measure aligned to key learning outcomes

Key Tasks/Considerations:

- Set a maximum assessment length based on the number and cognitive complexity (per Bloom’s taxonomy) of the learning outcomes, while keeping the burden on participants low enough to encourage effortful responding.

- Determined which learning outcomes to assess based on their practical importance and the feasibility of generating appropriate items/tasks.

- Items were developed to meet the following criteria:

  - Realistic context (scenario-based items)

  - Accessible language (no jargon)

  - Alignment to the designated outcome with respect to content and cognitive complexity (e.g., “identify/distinguish” outcomes were assessed with multiple-choice items, while “describe” outcomes were assessed with open response items)
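The alignment criterion can be checked mechanically once the blueprint records each outcome's action verb and each item's format. The sketch below assumes a hypothetical verb-to-format mapping (identify/distinguish to multiple-choice, describe to open response, following the example in the criteria) and hypothetical outcome and item IDs; outcomes whose verbs are not in the mapping, such as attitude outcomes measured with Likert items, are simply not checked.

```python
# Hypothetical rule: action verb -> item format that matches its complexity
EXPECTED_FORMAT = {
    "identify": "multiple_choice",
    "distinguish": "multiple_choice",
    "describe": "open_response",
}

def check_alignment(outcomes, items):
    """Flag format mismatches and outcomes with no items (hypothetical helper).

    outcomes: dict mapping outcome_id -> action verb.
    items: list of (item_id, outcome_id, item_format); every outcome_id must
    appear in `outcomes`, otherwise a KeyError is raised.
    Returns (misaligned items, sorted list of uncovered outcome IDs).
    """
    misaligned = []
    covered = set()
    for item_id, outcome_id, item_format in items:
        covered.add(outcome_id)
        expected = EXPECTED_FORMAT.get(outcomes[outcome_id])
        if expected is not None and item_format != expected:
            misaligned.append((item_id, outcome_id, item_format, expected))
    uncovered = sorted(set(outcomes) - covered)
    return misaligned, uncovered

# Hypothetical blueprint entries
outcomes = {"LO1": "identify", "LO2": "describe", "LO3": "distinguish"}
items = [
    ("Q1", "LO1", "multiple_choice"),
    ("Q2", "LO2", "multiple_choice"),
    ("Q3", "LO2", "open_response"),
]
print(check_alignment(outcomes, items))
```

Here Q2 would be flagged because a "describe" outcome is measured with a multiple-choice item, and LO3 would be reported as having no items at all.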

Step 2: Collect pre-post outcomes data and participant feedback

Key Tasks/Considerations:

- Learning outcomes data were collected at two timepoints:

  - Pre-test. A survey link was emailed to participants two weeks before the workshop to allow ample time for at-home completion. Laptops were also provided at the start of Day 1 (during breakfast) for those unable to complete the pre-test in advance.

  - Post-test. Participants completed the post-test at the end of Day 3 on secure laptops with facilitator supervision.

- Participant feedback (on the workshop’s content, structure, and facilitation) was solicited through the following:

  - Brief, anonymous surveys administered through the Socrative platform at the end of each day

  - A 30-minute guided conversation with all participants at the end of Day 3

Step 3: Transform results into meaningful insights that generate action

Key Tasks/Considerations:

- Disaggregated Results. Results were analyzed separately for graduate students and full-time staff to allow for more meaningful interpretation and encourage targeted action by relevant stakeholders (e.g., director of the student affairs master’s program).

- Scoring Procedure. For open response items, a team of two raters provided independent ratings using a detailed scoring rubric. In cases of disagreement, they engaged in an adjudication process to come to consensus. Items with low inter-rater reliability before adjudication were flagged for review. Additionally, multiple choice items with extremely high difficulty and/or low discrimination were flagged.

- Implementation Integrity. All workshop sessions were observed by at least one member of the training team who was not facilitating that session. Observers tracked the duration of each workshop component and made subjective evaluations of participant engagement and facilitator clarity/effectiveness. These data provided important context for interpreting assessment results and strengthened claims about the effectiveness of the workshop curriculum.

- Participant Feedback. While the outcomes and implementation integrity data were helpful for identifying what to improve, participant feedback was instrumental in determining how to improve. These qualitative data shaped improvement efforts by providing insight into specific barriers to participant engagement and understanding.
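The rater-agreement and item-analysis checks described under Scoring Procedure can be sketched with standard classical test theory statistics. The case study does not say which indices or cutoffs were used, so this sketch assumes Cohen's kappa for two raters, proportion correct as item difficulty, and the corrected item-total correlation as discrimination; the function names, the thresholds `p_min` and `r_min`, and the data are hypothetical.

```python
from statistics import mean

def cohens_kappa(rater1, rater2):
    """Chance-corrected agreement between two raters' category codes."""
    n = len(rater1)
    if n == 0 or n != len(rater2):
        raise ValueError("ratings must be non-empty and paired")
    categories = set(rater1) | set(rater2)
    p_observed = sum(a == b for a, b in zip(rater1, rater2)) / n
    p_expected = sum((rater1.count(c) / n) * (rater2.count(c) / n) for c in categories)
    if p_expected == 1:  # both raters used one identical category throughout
        return 1.0
    return (p_observed - p_expected) / (1 - p_expected)

def pearson(x, y):
    """Pearson correlation; NaN when either variable is constant."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return float("nan")
    return sxy / (sxx * syy) ** 0.5

def flag_items(responses, p_min=0.25, r_min=0.2):
    """Flag 0/1-scored items that are very hard or weakly discriminating.

    responses: one row per participant, one 0/1 entry per item.
    Returns (item_index, difficulty, discrimination) for each flagged item.
    """
    flagged = []
    for j in range(len(responses[0])):
        item = [row[j] for row in responses]
        rest = [sum(row) - row[j] for row in responses]  # total score without item j
        p = mean(item)           # proportion correct (classical difficulty)
        r = pearson(item, rest)  # corrected item-total correlation
        if p < p_min or not r >= r_min:  # `not r >= r_min` also flags NaN
            flagged.append((j, p, r))
    return flagged

# Hypothetical rubric scores from two raters, before adjudication
print(cohens_kappa([2, 1, 0, 2, 1, 2], [2, 1, 1, 2, 1, 2]))

# Hypothetical 0/1 responses: 5 participants x 3 multiple-choice items
responses = [[1, 1, 0], [1, 0, 0], [1, 1, 0], [0, 0, 0], [1, 1, 1]]
print(flag_items(responses))
```

Because rubric scores are usually ordinal, a weighted kappa or exact/adjacent agreement rates may be better suited to open response items; unweighted kappa is used here only to keep the sketch short.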

Key Results

What we found: While there was ample assessment training aimed at getting student affairs professionals to the novice level in most skills (i.e., able to apply their assessment knowledge to simplified or hypothetical examples), there was no training to support professionals in progressing to the intermediate level (able to apply their knowledge to real-life assessment projects).

Additionally, offerings that allowed for hands-on practice were provided infrequently and at high cost (financial/time) to individuals and the Division.

Next steps: A plan was developed to institute a cohort-based training model where learners meet regularly over the course of a year to design and implement real-life assessment projects within their offices while receiving timely, personalized instruction and feedback from CARS faculty/graduate students.

Lessons Learned

Implementation Successes

- High subject matter expert (SME) engagement and satisfaction with the process. Clear communication (and negotiation) with SMEs in advance about their roles in each phase and the anticipated time commitment made the process smoother and enabled SMEs to budget enough time to engage thoughtfully.

- Strategic inclusion of diverse stakeholder voices. Diverse stakeholders (from CARS experts and divisional leaders to on-the-ground student support staff) were consulted at different points in the process, based on when and where their feedback would be most valuable.

- Identification of “sneaky” training gaps. Gathering detailed information on existing training opportunities (e.g., cost, timing, reach) made it possible to identify instances where training existed but was inaccessible (or even unknown) to those who needed it most.

Improvement/Growth Opportunities

- Use an impartial party to conduct the focus group to minimize socially desirable responding.

¹ This case study describes work that took place in 2017-2018 and does not necessarily reflect current programming at JMU; descriptions of events/outcomes are subjective and written from my lens as Graduate Student Lead of the CARS Student Affairs Assessment Support Services Team.