Uncovering the Real Problem

SME Whisperer Case Study

Problem/Impetus

Research demonstrates that holistic student support (i.e., support that is proactive and personalized and that addresses students’ academic and non-academic needs) is one of the most effective interventions for increasing academic success and student retention. Accordingly, East West Community College (EWCC) purchased the ISSAQ Student Survey, a measure of incoming students’ noncognitive skills (e.g., organization, sense of belonging, persistence), in the hope of using the data to provide more holistic support for first-year students.

Five years later, however, only 5-10% of incoming students complete the ISSAQ survey each semester. Furthermore, faculty/staff in the First-Year Experience (FYE) Office are unsure whether and how they are expected to use the survey results in their day-to-day work.

The Client: Director, First-Year Experience (FYE) Office

The Ask: Facilitate a one-hour training during a divisional all-staff meeting on the importance of holistic student support and how to use the ISSAQ Student Survey to support student success, particularly in advising/coaching relationships.

Context

- While the FYE director is a strong advocate for holistic student support and believes in the utility of ISSAQ noncognitive data, college leadership has demonstrated only nominal support for the FYE office’s ISSAQ initiatives in the past.

- The Division of Student Success all-staff meeting will be attended by both FYE and non-FYE staff; however, only FYE staff are expected to use ISSAQ survey data in their day-to-day work.

- Although enthusiastic about ISSAQ, the FYE director is unclear on how the data will be used and is hesitant to make anything mandatory. Expectations have been stated, but there is no method of accountability.

- Each year since ISSAQ was adopted, optional ISSAQ training workshops have been offered during Faculty Development Week, with minimal attendance. The feedback from these workshops suggests faculty/staff believe they don’t have time to integrate another tool into their workflow.

- Stated expectations differ from day-to-day reality. Holistic support is expected, but advising meetings have historically (and logistically) been set up to focus on course selection. Students are expected to come to advisors for assistance, and caseloads are too large to allow for proactive outreach or personalized support.

- Definitions of “holistic student support” differ across the college and even within the division.

- The FYE office is composed of full-time advisors, part-time faculty coaches, and peer tutors; however, these roles have overlapping responsibilities.

Plan/Solution

Step 1: Clarify client goals

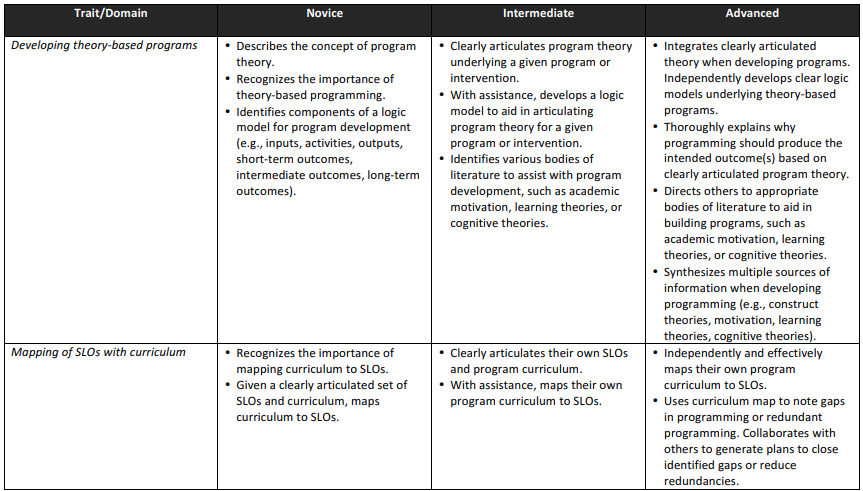

A rubric was developed to articulate the knowledge, skills, and attitudes needed to engage in quality assessment practice, as well as the learning progression in these skills from novice to advanced.

Step 2: Assess institutional readiness

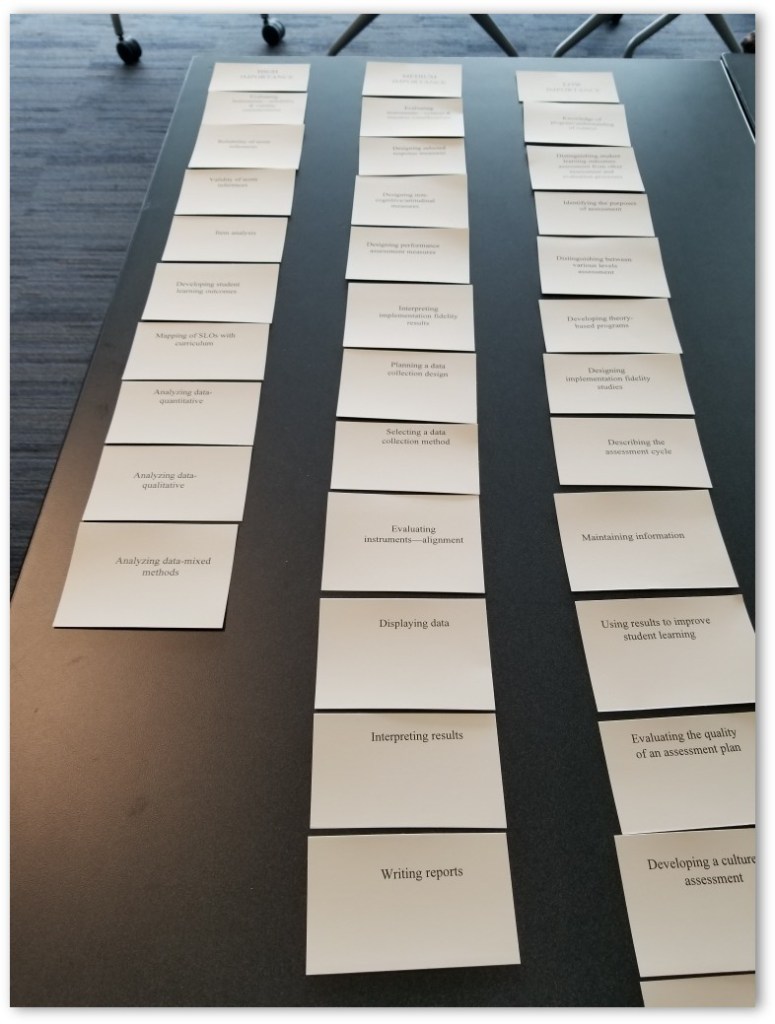

SMEs within CSAA and the Division of Student Affairs engaged in a facilitated card sort activity to rank the importance of each rubric skill for effective student affairs practice at CU.

Step 3: Conduct task analysis

SMEs mapped existing assessment trainings and resources to rubric skills to identify critical gaps (high-importance skills with little or no training) and inefficiencies (low-importance skills with abundant training).

Process Highlights

Step 1: Determine necessary skills

(Skill Area 2: Create and Map Programming to Outcomes)

- Relied heavily on professional standards, peer-reviewed research, and a review of CSAA training documents to create a strong first draft (thus reducing the number of feedback iterations and protecting SMEs’ time).

- Sought feedback from CSAA faculty, student affairs leadership, and select student affairs professionals across the Division.

- Solicited high-level feedback via survey and more detailed feedback through follow-up interviews to streamline the process.

Step 2: Prioritize skills by importance

- Facilitated an in-person card sort activity with key stakeholders within CSAA and across the Division of Student Affairs. Workshop participants were asked to:

  - Review the Assessment Skills Framework (rubric).

  - Organize traits/domains into high-, moderate-, and low-importance categories.

  - Rank the high-importance traits/domains from most to least important.

  - Debrief about the card sort process and discuss the rankings.

- Solicited feedback from less critical stakeholders via an online survey version of the card sort activity.

- Compiled results and shared final rankings with key stakeholders for approval.

Step 3: Identify critical training gaps

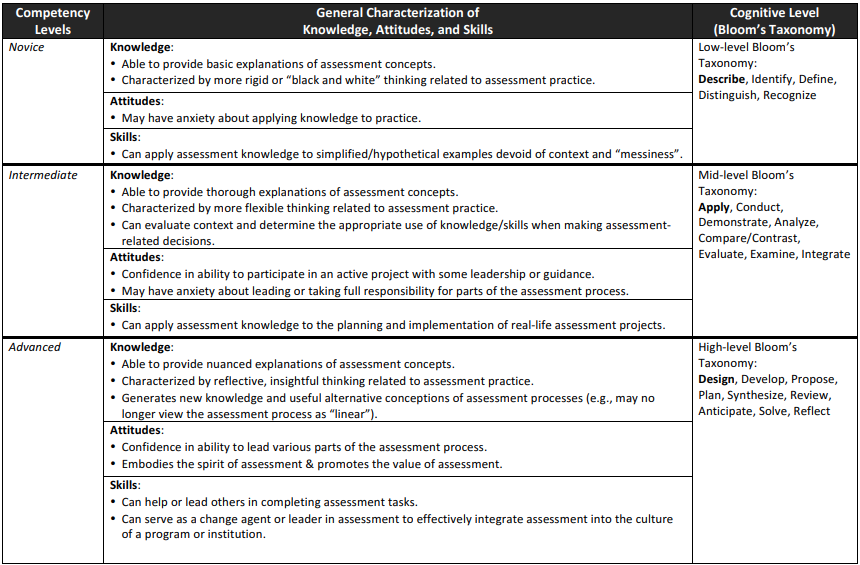

(Competency Level Overview)

- Compiled an inventory of existing CU trainings and resources through an extensive document/website review and follow-up SME interviews. To assist with identifying training gaps/inefficiencies, the following information was gathered for each offering:

  - Cost (to individual, CSAA, and/or the Division)

  - Timing/frequency

  - Mode of delivery (e.g., in person, video, handout)

  - Intended audience (e.g., incoming skill level, level of seniority, office/role targeted)

  - Reach (how many learners use or benefit from the offering each year)

- Coordinated with CSAA leadership to budget time during the yearly team retreat to gather information about the skill coverage of existing CSAA trainings and resources. Faculty were asked to respond to the following questions:

  - What skills/attitudes are targeted by the training/resource?

  - (Training only) What competency level can participants reasonably be expected to achieve by the end of the training?

Key Results

What we found: While there was ample assessment training aimed at getting student affairs professionals to the novice level in most skills (i.e., able to apply their assessment knowledge to simplified or hypothetical examples), there was no training to support professionals in progressing to the intermediate level (able to apply their knowledge to real-life assessment projects).

Additionally, offerings that allowed for hands-on practice were provided infrequently and at high cost (financial/time) to individuals and the Division.

Next steps: A plan was developed to institute a cohort-based training model where learners meet regularly over the course of a year to design and implement real-life assessment projects within their offices while receiving timely, personalized instruction and feedback from CARS faculty/graduate students.

Lessons Learned

Implementation Successes

- High SME engagement and satisfaction with the process. Clear communication (and negotiation) with SMEs in advance about their roles during each phase and the anticipated time commitment facilitated a smoother process and enabled SMEs to budget sufficient time to engage thoughtfully.

- Strategic inclusion of diverse stakeholder voices. Stakeholders ranging from CARS experts and divisional leaders to on-the-ground student support staff were consulted at different points in the process, based on when and where their feedback would be most valuable.

- Identification of “sneaky” training gaps. Gathering detailed information on existing training opportunities (e.g., cost, timing, reach) made it possible to identify instances where training existed but was inaccessible (or even unknown) to those who needed it most.

Improvement/Growth Opportunities

- Greater focus on actual (vs. desired) assessment tasks. Rather than focusing exclusively on what student affairs professionals should do with respect to assessment, a better understanding of their actual day-to-day assessment tasks would have allowed us to design learning solutions that integrate more readily into existing workflows and cover knowledge and skills that transfer more easily to the workplace.

- Reducing burden on SMEs. The cognitive and time burden on CARS faculty could have been further reduced by asking them to provide targeted feedback on only a subset of the skills in the Assessment Skills Framework (those most closely related to their areas of expertise).